Quantum computers are no longer discussed as standalone machines.

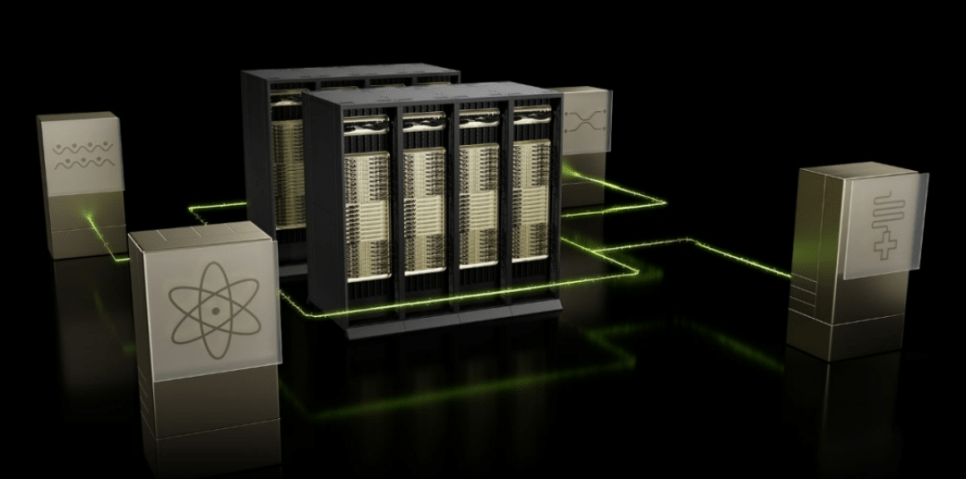

The more realistic picture now is a hybrid architecture in which CPUs, GPUs, and QPUs work together. That is the most important shift in the industry today. Useful quantum computing is not arriving as an isolated box. It is arriving as part of a broader accelerated computing stack, where classical infrastructure handles simulation, orchestration, control, error correction, and data processing around the quantum core. NVIDIA’s recent messaging around CUDA-Q and NVQLink makes that direction especially clear.

1. Useful quantum does not arrive alone

The most important idea in quantum computing right now is hybridization.

A practical quantum system needs much more than a QPU. It needs classical compute to prepare circuits, simulate workloads, process measurement data, decode errors, and feed control signals back into the system. That is why the industry is moving toward hybrid quantum-classical supercomputing rather than pure quantum machines operating in isolation. NVIDIA’s CUDA-Q is explicitly described as a QPU-agnostic platform for accelerated quantum supercomputing, which tells you exactly how the company sees the future architecture.
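To make the division of labor concrete, here is a minimal sketch of a hybrid loop in plain Python. It does not use CUDA-Q or any real QPU: `run_on_qpu` is a hypothetical stand-in that simulates a one-qubit circuit and returns measurement counts, and the step count, learning rate, and shot count are arbitrary assumptions. The point is only the shape of the workflow: the classical side chooses the circuit parameter, processes the raw measurement data, and feeds an updated parameter back.

```python
import math
import random

def run_on_qpu(theta, shots=2000, rng=None):
    """Hypothetical stand-in for a QPU call.

    Simulates preparing RY(theta)|0> and measuring in the Z basis,
    returning raw counts the way a real backend would.
    """
    rng = rng or random
    p_one = math.sin(theta / 2) ** 2  # probability of reading out "1"
    ones = sum(1 for _ in range(shots) if rng.random() < p_one)
    return {"0": shots - ones, "1": ones}

def expectation_z(counts):
    """Classical post-processing: turn counts into an estimate of <Z>."""
    shots = counts["0"] + counts["1"]
    return (counts["0"] - counts["1"]) / shots

def hybrid_minimize(theta=0.3, steps=60, lr=0.4, seed=7):
    """Classical optimizer wrapped around repeated 'QPU' calls.

    Uses the parameter-shift rule to estimate the gradient of <Z>
    from two extra circuit runs, then takes a gradient-descent step.
    """
    rng = random.Random(seed)
    for _ in range(steps):
        plus = expectation_z(run_on_qpu(theta + math.pi / 2, rng=rng))
        minus = expectation_z(run_on_qpu(theta - math.pi / 2, rng=rng))
        grad = 0.5 * (plus - minus)
        theta -= lr * grad  # feed the updated parameter back to the "QPU"
    return theta, expectation_z(run_on_qpu(theta, rng=rng))

if __name__ == "__main__":
    theta, z = hybrid_minimize()
    print(f"theta = {theta:.3f}, estimated <Z> = {z:.3f}")
```

In a real system the simulated function would be replaced by a call into a quantum backend, and the classical loop is where GPU acceleration would matter as circuits and shot counts grow.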

2. NVIDIA’s quantum strategy has three layers

NVIDIA’s quantum strategy is not about building its own QPU.

It is about owning the infrastructure around the QPU. The three main pillars are CUDA-Q, NVQLink, and the broader classical stack built on Grace, DGX, cuQuantum, cloud infrastructure, and GPU acceleration. CUDA-Q provides the software layer that lets developers orchestrate CPUs, GPUs, and QPUs inside one programming framework. NVQLink is the interconnect layer that links quantum processors to accelerated computing. And the classical stack handles simulation, measurement processing, control calculation, and quantum error correction workloads around the QPU.

The strategic meaning is simple.

NVIDIA is not trying to win by choosing one quantum hardware modality. It is trying to become the common platform that every modality plugs into.

3. The real core is NVQLink

If CUDA-Q is the software layer, NVQLink is the architectural center.

NVIDIA introduced NVQLink as an open architecture that connects quantum processors with NVIDIA GPUs for large-scale hybrid workflows. The company’s public material says NVQLink is designed to support high-throughput, low-latency feedback between quantum and classical systems, which is exactly what real-time measurement interpretation and quantum error-correction loops require. NVIDIA has also highlighted that NVQLink is being exposed through cudaq-realtime, reinforcing its role as a practical interface rather than a conceptual one.
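The kind of work that sits inside such a feedback loop can be illustrated with a toy decoder. The sketch below is a classical analogy, not NVQLink or cudaq-realtime code: it uses ordinary bits in place of qubits, a 3-bit repetition code, and hypothetical function names. On real hardware the parities would come from ancilla measurements rather than from reading the data directly, and the whole round trip would have to finish before the qubits decohere.

```python
# Lookup table for the 3-bit repetition code:
# syndrome (parity of bits 0,1 ; parity of bits 1,2) -> index of the bit to flip.
SYNDROME_TO_FLIP = {
    (0, 0): None,  # no error detected
    (1, 0): 0,     # first bit disagrees with the other two
    (1, 1): 1,     # middle bit disagrees
    (0, 1): 2,     # last bit disagrees
}

def measure_syndrome(bits):
    """Return the two parity checks a QPU would report each round."""
    return (bits[0] ^ bits[1], bits[1] ^ bits[2])

def decode_and_correct(bits):
    """Classical decoding step: map the syndrome to a correction and apply it."""
    flip = SYNDROME_TO_FLIP[measure_syndrome(bits)]
    corrected = list(bits)
    if flip is not None:
        corrected[flip] ^= 1
    return corrected

if __name__ == "__main__":
    noisy = [0, 1, 0]  # logical 0 with a single bit-flip on the middle bit
    print(measure_syndrome(noisy), decode_and_correct(noisy))
```

Production decoders for codes such as the surface code are far heavier than a four-entry lookup table, which is why the decoding step is a natural candidate for GPU acceleration over a fast link.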

The safest way to state the point is that NVQLink is designed for the fast feedback loop needed to make hybrid quantum-classical computing practical. Figures such as 400 Gb/s throughput and sub-4 microsecond latency have been mentioned as targets, but they are not confirmed here against a primary NVIDIA source that states both together, so they should be read as indicative rather than as hard specs.

4. NVIDIA’s real move is ecosystem capture

NVIDIA is building a platform business around quantum.

The company has been linking CUDA-Q and NVQLink to a broad partner ecosystem rather than committing to one QPU type. KISTI announced in March 2026 that it is building a quantum-HPC hybrid environment with NVIDIA and IonQ, using CUDA-Q and NVQLink. NVIDIA’s public event materials also position the company as working across the broader quantum landscape rather than as a direct QPU manufacturer.

That is the important strategic point.

NVIDIA does not need to manufacture the winning QPU itself. It only needs to make sure that whichever QPU wins, it still runs through CUDA-Q and NVQLink.

5. We are not at full commercialization yet, but the path is visible

Quantum computing is still mostly in the research and early utility stage.

The industry is clearly progressing, but it has not yet reached broad general-purpose commercial deployment. What is happening now is more limited and more concrete: cloud access, experimental hybrid systems, better qubit quality, stronger benchmarking, and selected application trials in optimization, chemistry, and simulation. NVIDIA’s own Quantum Day material frames the field as being at an inflection point rather than at full maturity, which is the right way to describe it.

So the better roadmap is not “commercialized versus not commercialized.”

It is research → hybrid utility → domain-specific advantage → broader commercial deployment.

6. The key question is not who has the coolest machine, but who solves useful problems

This is the right way to think about the sector.

The quantum industry is moving away from headline qubit counts as the only story. Increasingly, the real question is whether a machine can execute useful circuits at meaningful width and depth with enough fidelity to solve an economically relevant task. Broad benchmarking reviews published in 2026 make exactly this point: performance measurement is becoming more nuanced, and the industry still lacks one universally accepted standard.
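A rough back-of-the-envelope calculation shows why width, depth, and fidelity have to be read together. The sketch below rests on deliberately crude assumptions that are not from any vendor: errors are independent, only two-qubit gates matter, and each layer applies about one two-qubit gate per pair of qubits. The function names are made up for illustration.

```python
import math

def estimated_success(width, depth, two_qubit_error):
    """Crude estimate of the chance that a circuit runs with no gate error.

    Assumes independent errors and roughly width // 2 two-qubit gates per layer.
    """
    gates = depth * (width // 2)
    return (1.0 - two_qubit_error) ** gates

def max_useful_depth(width, two_qubit_error, target=0.5):
    """Largest depth at which the estimated success stays at or above target."""
    if not 0.0 < two_qubit_error < 1.0:
        raise ValueError("two_qubit_error must be strictly between 0 and 1")
    max_gates = math.log(target) / math.log(1.0 - two_qubit_error)
    return int(max_gates // (width // 2))

if __name__ == "__main__":
    for err in (1e-2, 1e-3, 1e-4):
        depth = max_useful_depth(width=100, two_qubit_error=err)
        print(f"error {err:g}: about {depth} layers at 100 qubits")
```

Under these assumptions, a 100-qubit machine with a 0.1% two-qubit error rate supports only about a dozen layers before the estimated success probability drops below one half, which is why a headline qubit count says little on its own.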

Some commentary uses the term QUOPS for this kind of metric, but it is not established here as a broadly adopted industry-standard benchmark. The safer statement is that the market is increasingly focused on benchmarks that reflect useful circuit execution, not just raw qubit counts. That keeps the argument strong without overstating one metric.

7. The real value of quantum is solving specific problem classes

Quantum computing matters because of where it might be useful, not because it is exotic.

The strongest candidate use cases remain financial risk analysis and portfolio optimization, drug discovery and molecular simulation, logistics and scheduling, materials and energy research, and selected machine-learning or combinatorial workloads. BASF explicitly says it is developing and implementing quantum algorithms for industrially relevant use cases, and D-Wave highlights live applications across logistics, manufacturing, mobility, supply chain, and financial services.

That is why the market is shifting.

The real value of quantum will not come from general-purpose consumer computing. It will come from high-value, narrow problem classes where even a partial computational edge matters.

8. IonQ: stable, accessible, and increasingly integrated into hybrid infrastructure

IonQ remains one of the clearest pure-play names in gate-based quantum.

Its pitch centers on accessibility, fidelity, and algorithmic performance rather than raw physical qubit count alone. IonQ’s Tempo system is designed around 100 qubits and #AQ 64 (#AQ, or algorithmic qubits, is IonQ’s own application-level benchmark), and the company says it is targeting commercial-advantage-capable workloads. In Korea, KISTI also announced that IonQ’s next-generation Tempo system will be linked with KISTI’s supercomputing environment through CUDA-Q and NVQLink, making IonQ one of the most visible participants in Korea’s emerging quantum-HPC stack.

That makes IonQ’s story relatively straightforward.

It is one of the better-positioned companies if the market values cloud access, hybrid integration, and near-term practical usability over pure speculative scale claims.

9. Rigetti: superconducting, modular, and focused on on-prem and system integration

Rigetti is a very different case.

It is built around superconducting qubits, faster gate operations, and increasingly a modular chiplet architecture. In recent official updates, Rigetti highlighted its 9-qubit Novera system and its Cepheus family, moving from 36 qubits to 108 qubits, with the 108-qubit system now generally available. Company filings and press releases also describe Rigetti as selling on-premises systems from 9 qubits up to 108 qubits, with upgrade paths and modular architecture at the center of the roadmap.

So Rigetti’s investment logic is not the same as IonQ’s.

It is more exposed to superconducting architecture, on-prem infrastructure, and modular scaling inside hybrid quantum-classical environments.

10. D-Wave: not universal first, but possibly commercial first in certain niches

D-Wave should be understood differently from IonQ and Rigetti.

Its strength is not broad gate-based universal computation. It is annealing-based quantum computing focused on optimization-heavy use cases. That makes it more specialized, but in some commercial areas it can also make the story more immediate. D-Wave’s own materials emphasize use cases in logistics, manufacturing, mobility, scheduling, supply chains, and finance, while BASF publicly discusses quantum work for industrially relevant applications.
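For readers who want to see what an optimization-heavy problem looks like in the form annealers work with, here is a small sketch of a QUBO (quadratic unconstrained binary optimization) problem. It is not D-Wave code: the brute-force search is a stand-in for the annealer, and the costs, penalty weight, and function names are invented for illustration. The toy task is to pick exactly one of three options at the lowest cost, with the "exactly one" rule folded into the objective as a penalty.

```python
from itertools import product

def build_pick_one_qubo(costs, penalty):
    """Encode 'choose exactly one option at minimum cost' as a QUBO.

    Expands penalty * (sum(x) - 1)**2 using x*x == x for binary x,
    dropping the constant term, which does not affect the minimizer.
    """
    n = len(costs)
    Q = {(i, i): costs[i] - penalty for i in range(n)}
    for i in range(n):
        for j in range(i + 1, n):
            Q[(i, j)] = 2 * penalty
    return Q

def qubo_energy(Q, x):
    """Energy of a binary assignment x under the QUBO coefficients Q."""
    return sum(coeff * x[i] * x[j] for (i, j), coeff in Q.items())

def brute_force_minimum(Q, n):
    """Try every assignment; only feasible for tiny n."""
    return min(product((0, 1), repeat=n), key=lambda x: qubo_energy(Q, x))

if __name__ == "__main__":
    Q = build_pick_one_qubo(costs=[3, 1, 2], penalty=10)
    best = brute_force_minimum(Q, 3)
    print(best, qubo_energy(Q, best))
```

The exhaustive search doubles in cost with every added variable, so real scheduling or routing problems with thousands of variables are where a specialized sampler, quantum or classical, is supposed to earn its keep.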

That is why D-Wave often looks more practical in near-term enterprise deployment discussions.

It is not necessarily the path to universal quantum computing first. But it may be one of the faster paths to commercial optimization use cases.

11. So what is the real investment conclusion?

The most important conclusion is this:

The quantum race is no longer only about who builds the best QPU.

It is increasingly about who owns the hybrid stack, who can prove useful application performance, and who gets embedded into the workflows where quantum and classical computing meet. NVIDIA is trying to own the platform. IonQ is trying to lead on accessible high-performance trapped-ion systems. Rigetti is pushing modular superconducting systems. D-Wave is leaning into optimization-heavy commercial niches. Those are very different investment stories, even though they all sit under the quantum label.

That is why the next phase of quantum investing will likely reward selectivity, not enthusiasm.

The winners may not be the companies with the biggest headline qubit counts, but the companies that can actually fit into a useful hybrid compute architecture and solve valuable problems.
